May 14, 2026

Hardcoded Trust, Translated

Alexander Semien, Head of Growth

Google's threat intel report this week landed as another scary AI headline. If you've been watching Mythos, Daybreak, Big Sleep, and last year's AI-vulnerability research demos (most of us in security have), it reads less like a thunderclap and more like Google's PR catching up to its own threat researchers.

On May 11, Google Threat Intelligence Group (GTIG) attributed a zero-day exploit to AI-assisted development. The vulnerability was a two-factor authentication bypass in a popular open-source sysadmin tool. The actual news is in-the-wild attribution, not capability novelty.

That said, the report does something useful that the rest of the discourse on AI-in-security has mostly skipped. It names a specific bug class, a semantic logic flaw, and explains why traditional tools cannot find it. That is the part worth slowing down on, because most of the coverage of this report has not actually translated the technical content.

In simpler terms, a hardcoded trust assumption is code that assumes some condition is true without checking it. Often it is not a single line of bad code. It is a quiet disagreement between two parts of the same function.

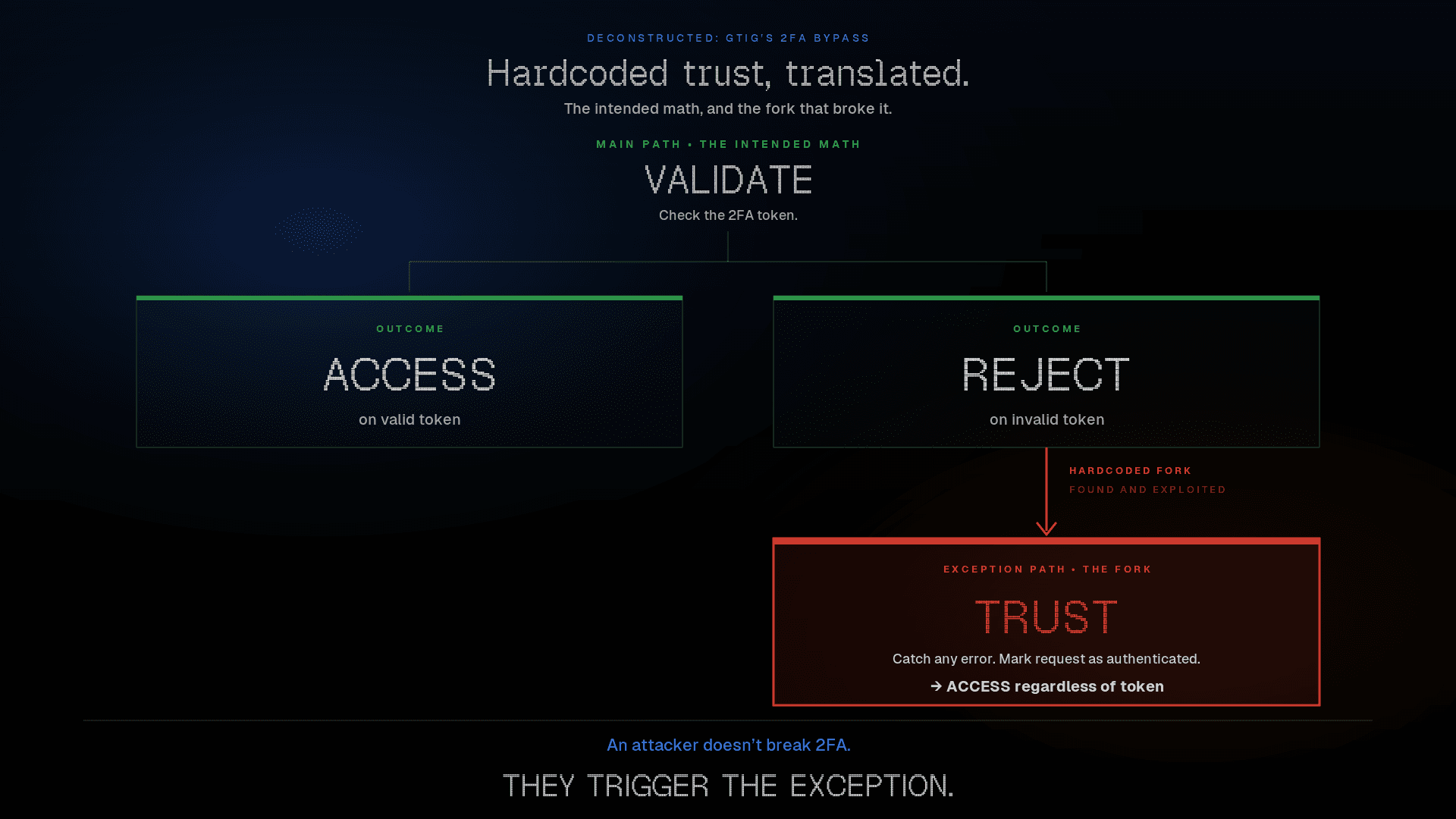

The GTIG bug was in a 2FA enforcement function in an admin tool. Two paths handled different scenarios:

Main path: checks the 2FA token, rejects the request if the token fails

Exception path: silently passes the request through as authenticated, even on a malformed token or an internal service error

The intent was "don't lock out legitimate users if our 2FA service breaks." An attacker doesn't need to break 2FA. They trigger the exception path.

GTIG notes one detail that sharpens the finding rather than softening it: the bypass requires valid user credentials to start. Stolen credentials are abundant, and 2FA is the layer that's supposed to stop attackers who already have them. AI just found a way around that layer.
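To make the two paths concrete, here is a minimal Python sketch of the pattern. It is not the code from the GTIG report. Every name in it (`verify_token`, `TwoFactorServiceError`, the fixed code `123456`) is a hypothetical stand-in, and it assumes the password check has already passed, which matches the precondition that the attacker holds valid credentials.

```python
class TwoFactorServiceError(Exception):
    """Hypothetical error raised when the 2FA backend cannot evaluate a token."""


def verify_token(user: str, token: str) -> bool:
    """Hypothetical stand-in for a call to a 2FA backend."""
    if not token.isdigit() or len(token) != 6:
        # Malformed input surfaces as an error, not as a clean "False".
        raise TwoFactorServiceError("could not parse token")
    return token == "123456"  # illustrative fixed code, for this sketch only


def enforce_2fa_fail_open(user: str, token: str) -> bool:
    """Flawed version: the exception path contradicts the function's purpose."""
    try:
        return verify_token(user, token)  # main path: a wrong token is rejected
    except TwoFactorServiceError:
        return True  # exception path: request passes through as authenticated


def enforce_2fa_fail_closed(user: str, token: str) -> bool:
    """One possible fix: any verification error denies the request."""
    try:
        return verify_token(user, token)
    except TwoFactorServiceError:
        return False
```

In this sketch a wrong but well-formed code such as `000000` is rejected, while a malformed value such as `not-a-token` is accepted by the fail-open version. Failing closed trades availability for safety, which is exactly the tradeoff the original intent was trying to avoid.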

The flaw isn't visible on any single path. It lives in the gap between two code paths that each look reasonable in isolation. A senior auditor reviewing either path alone would see nothing wrong and move on. The flaw only surfaces if you read both, hold the function's stated purpose against its actual behavior on every path, and ask whether they actually agree. That is days to weeks of focused attention, per codebase, with no guarantee of finding the bug.
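Holding a stated purpose against behavior on every path can be sketched as a small check. This is a toy illustration, not a description of how GTIG or any tool found the bug: `TokenOutcome`, `enforce_2fa`, and `paths_violating_purpose` are hypothetical names, and the four outcomes are an assumed simplification of what a real function can encounter.

```python
from enum import Enum
from typing import Callable, List


class TokenOutcome(Enum):
    """Assumed set of situations the enforcement function can encounter."""
    VALID = "valid"
    INVALID = "invalid"
    MALFORMED = "malformed"
    SERVICE_ERROR = "service_error"


def enforce_2fa(outcome: TokenOutcome) -> bool:
    """Hypothetical enforcement function with a fail-open exception path."""
    try:
        if outcome in (TokenOutcome.MALFORMED, TokenOutcome.SERVICE_ERROR):
            raise RuntimeError(outcome.value)
        return outcome is TokenOutcome.VALID
    except RuntimeError:
        return True  # "don't lock users out if the 2FA service breaks"


def paths_violating_purpose(
    enforce: Callable[[TokenOutcome], bool],
) -> List[TokenOutcome]:
    """Stated purpose: authenticate only when the token is valid.

    Returns every outcome where actual behavior disagrees with that purpose.
    """
    return [o for o in TokenOutcome if enforce(o) != (o is TokenOutcome.VALID)]


violations = paths_violating_purpose(enforce_2fa)
print([o.value for o in violations])
```

The hard part in a real codebase is not running a check like this. It is knowing what the stated purpose is and enumerating the paths in the first place, which is the reading-comprehension work described above.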

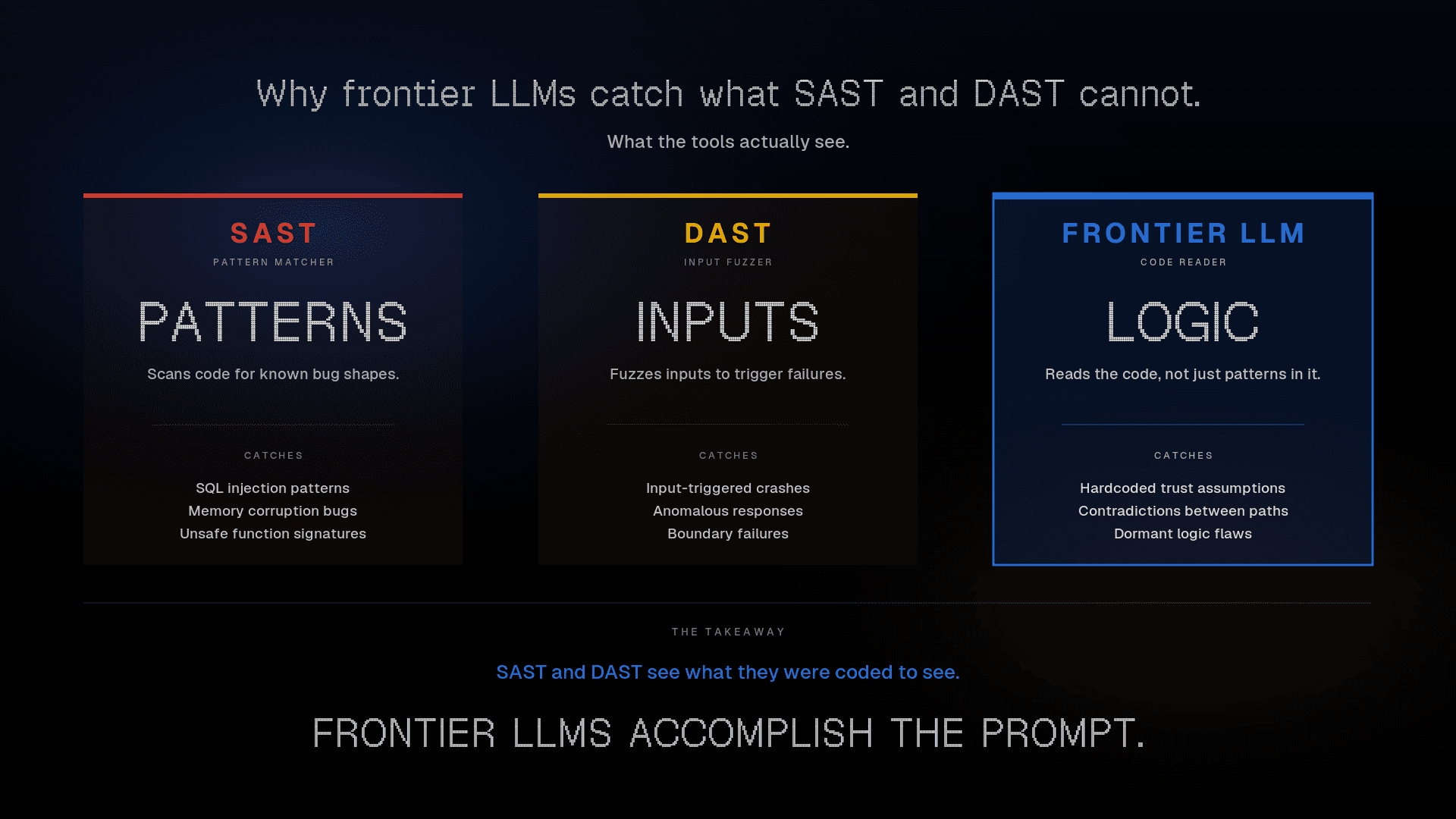

This is also why static and dynamic analysis tools were never going to catch it:

SAST scans for known bug shapes (SQL injection patterns, unbounded copies, unsafe function signatures)

DAST fuzzes inputs and watches for crashes or anomalous responses

Neither one reads the code for meaning. A semantic logic flaw isn't a shape; it is a contradiction between what a function does and what the function is supposed to do. Catching it requires reading comprehension. Humans do it, slowly. Pattern matchers don't do it at all.
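A toy pattern scanner shows the difference between a shape and a contradiction. The sketch below is a deliberate caricature: real SAST products use far richer rules and data-flow analysis, and the three regexes and both snippets here are invented for illustration.

```python
import re
from typing import List

# Illustrative "known bug shape" rules; real rule sets are much larger.
RULES = {
    "sql-string-concat": re.compile(r"execute\(\s*[\"'].*[\"']\s*\+"),
    "unbounded-copy": re.compile(r"\b(strcpy|gets|sprintf)\s*\("),
    "dynamic-eval": re.compile(r"\b(eval|exec)\s*\("),
}

# A classic injection shape: a query built by string concatenation.
SQLI_SNIPPET = 'cursor.execute("SELECT * FROM users WHERE name = \'" + name + "\'")'

# A hypothetical fail-open 2FA check: no dangerous call, no tainted string.
FAIL_OPEN_SNIPPET = '''
def enforce_2fa(user, token):
    try:
        return verify_token(user, token)
    except ServiceError:
        return True
'''


def scan(source: str) -> List[str]:
    """Return the names of every rule whose pattern appears in the source."""
    return sorted(name for name, rx in RULES.items() if rx.search(source))


print(scan(SQLI_SNIPPET))       # the injection shape is flagged
print(scan(FAIL_OPEN_SNIPPET))  # nothing to match: the code has no bad "shape"
```

The fail-open snippet is syntactically ordinary. Whether `return True` in the `except` branch is a bug depends entirely on what the function is supposed to guarantee, and that is information no pattern carries.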

That is the actual gap.

AI isn't just faster than scanners. It does a different kind of thinking, roughly the same kind a senior auditor does, at far higher throughput.

The capability on display in GTIG's report, finding semantic logic flaws and chaining them into exploit primitives, is not new. The benchmarks and research demos have existed for over a year. What changed is in-the-wild attribution. Threat actors have started using the capability the research community has been demonstrating.

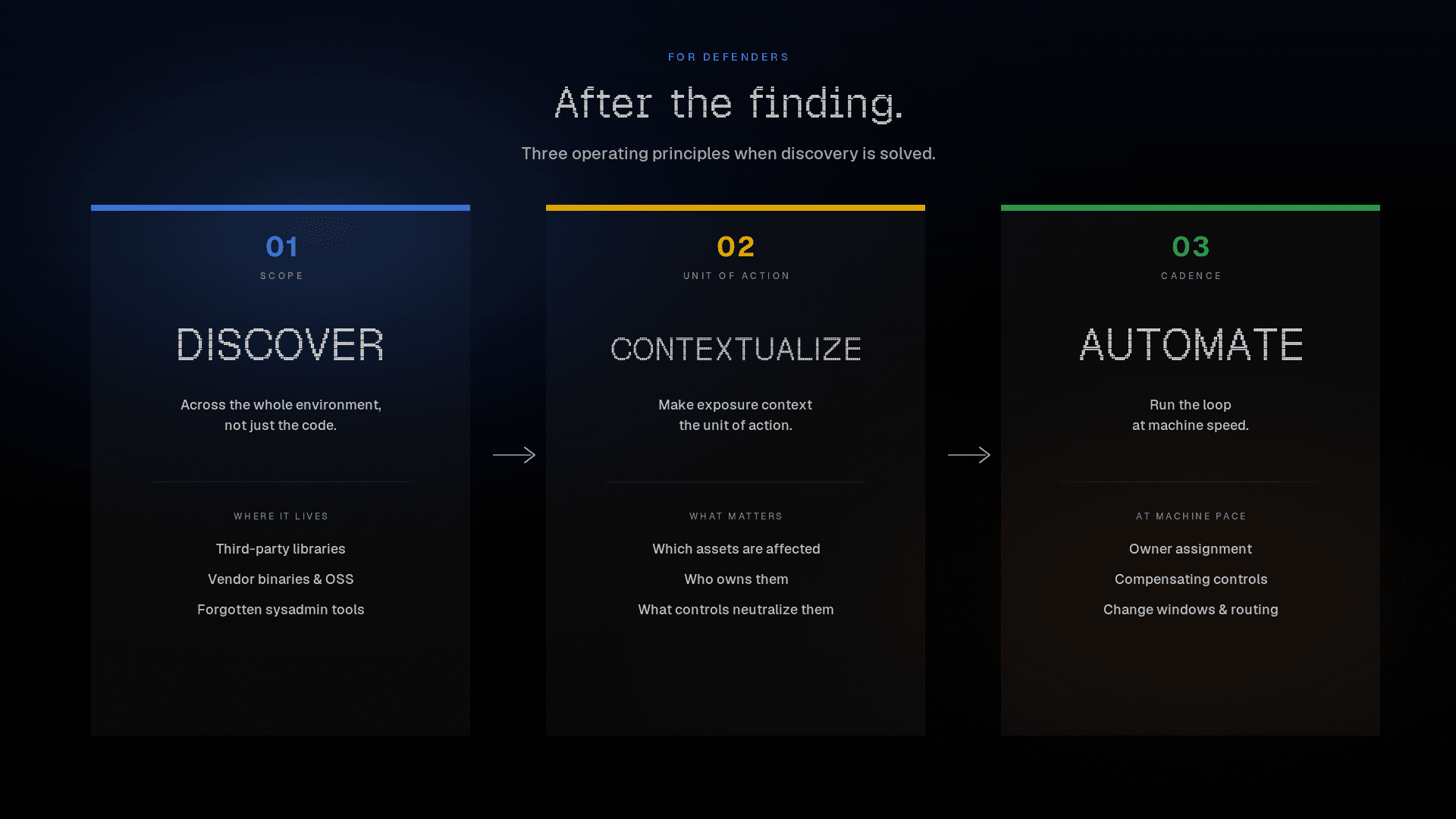

The consequences for defenders are straightforward:

Operate across the whole environment, not just the code. The dormant logic flaws AI now finds aren't just in code your team wrote. Third-party libraries, vendor binaries, OSS dependencies, and forgotten sysadmin tools all carry them.

Make exposure context the unit of action. Knowing a CVE exists isn't enough. What matters is which assets in your environment are actually affected, who owns them, and what compensating controls already neutralize the exposure.

Run the loop at machine speed. Patching alone can't keep pace with disclosure. When the volume of high-priority findings spikes, owner assignment, change windows, compensating control deployment, and ticket routing all have to operate on machine cadence.
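As a rough sketch of what "exposure context as the unit of action" could look like in data terms, the Python below joins one finding against an asset inventory and emits one record per affected asset. It is an assumption-heavy illustration, not a description of any product: the `Asset` and `Finding` shapes, the field names, and the sample inventory are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class Asset:
    name: str
    owner: str
    installed: Set[str]                              # components present on the asset
    controls: Set[str] = field(default_factory=set)  # compensating controls in place


@dataclass
class Finding:
    finding_id: str
    component: str             # the affected component
    neutralized_by: Set[str]   # controls assumed to neutralize the exposure


def triage(finding: Finding, inventory: List[Asset]) -> List[Dict[str, object]]:
    """Turn one finding into per-asset records with owner and mitigation status."""
    actions = []
    for asset in inventory:
        if finding.component not in asset.installed:
            continue  # not affected, so nothing to route
        mitigations = sorted(asset.controls & finding.neutralized_by)
        actions.append({
            "asset": asset.name,
            "owner": asset.owner,
            "status": "mitigated" if mitigations else "needs-action",
            "controls": mitigations,
        })
    return actions


inventory = [
    Asset("bastion-01", "infra-team", {"admin-tool"}, {"vpn-only-access"}),
    Asset("legacy-ops", "platform-team", {"admin-tool"}),
    Asset("web-01", "app-team", {"nginx"}),
]
finding = Finding("EXAMPLE-1", "admin-tool", {"vpn-only-access"})

for record in triage(finding, inventory):
    print(record)
```

Each record already carries the owner and the mitigation status, so routing a ticket or scheduling a change window can be automated from it rather than worked out by hand per finding.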

GTIG's report puts public attribution on a capability the research community demonstrated last year. Threat actors have caught up. Discovery just stopped being the constraint. What happens after a finding lands is the new fight, and it is the part Cogent was built to address.