May 13, 2026

The Question Mythos and Daybreak Don't Answer

Alexander Semien, Head of Growth, Cogent Security

OpenAI shipped Daybreak on Sunday, a frontier-model harness built on GPT-5.5 and Codex. Pointed at a code repository, it produces an editable threat model, runs candidate vulnerabilities in an isolated environment, and proposes fixes. My initial reaction was that frontier models have been able to do this for over a year, and what OpenAI actually shipped is a domain-specific harness with distinct marketing around it.

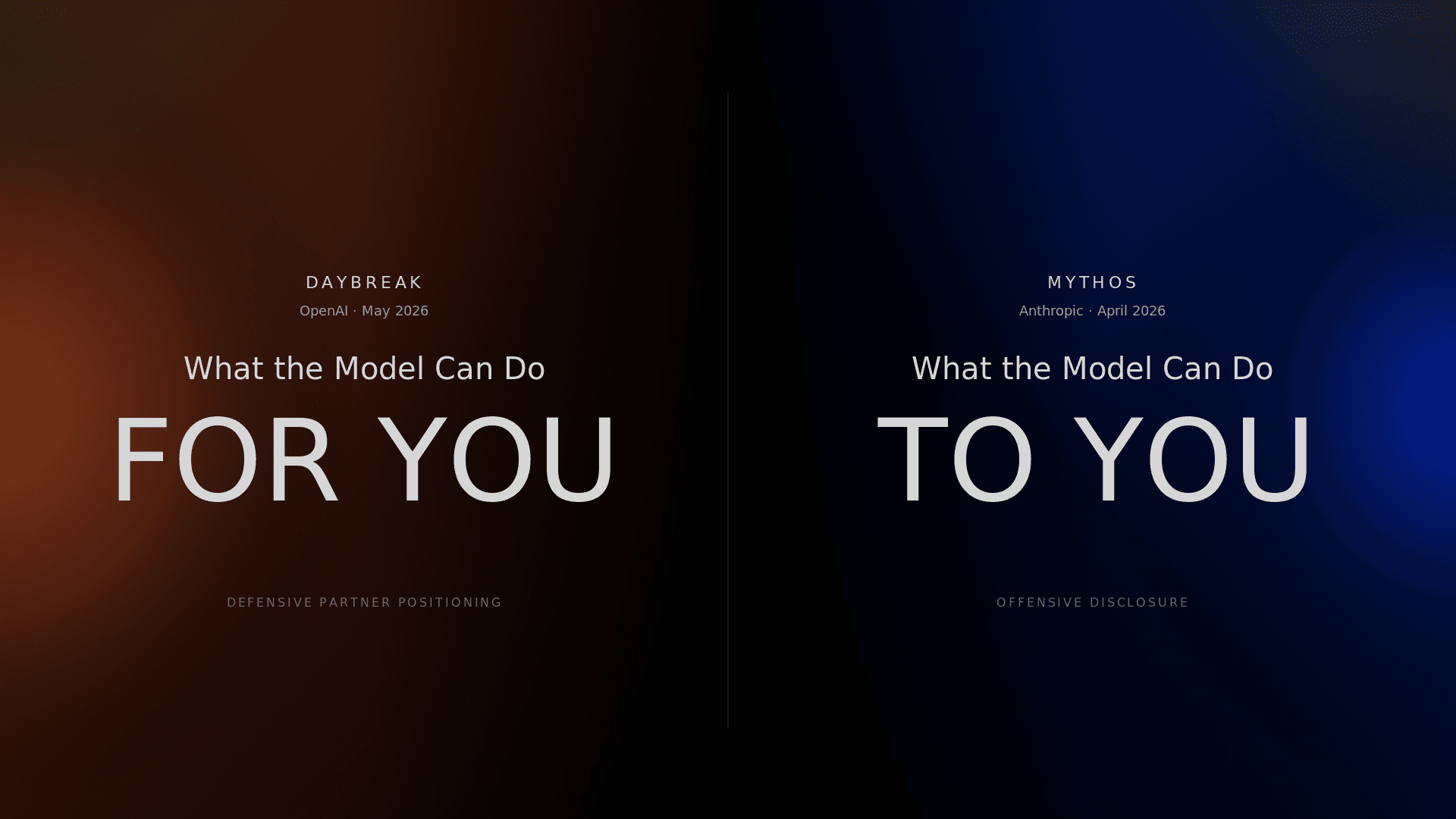

That marketing is anchored by the launch partners - Akamai, Cisco, Cloudflare, CrowdStrike, Fortinet, Oracle, Palo Alto Networks, and Zscaler - eight of the biggest names in cybersecurity lined up for day one, with a press cycle to match. Daybreak isn't really a cybersecurity product launch so much as a positioning play: OpenAI claiming the role of premier AI partner to cybersecurity, oriented around defense and distributed through the established security stack, with a pitch that boils down to what the model can do for you.

Anthropic took the opposite stance with Mythos, leading with an offensive framing: a research disclosure that Claude could find, chain, and exploit zero-day vulnerabilities at machine speed. The quiet, direct engagement with banks, federal agencies, and major American companies that came alongside the disclosure was the responsible-release wrap on an offensive capability, not a defensive product play. The pitch boils down to what the model could do to you. Same kind of product underneath, two opposite postures.

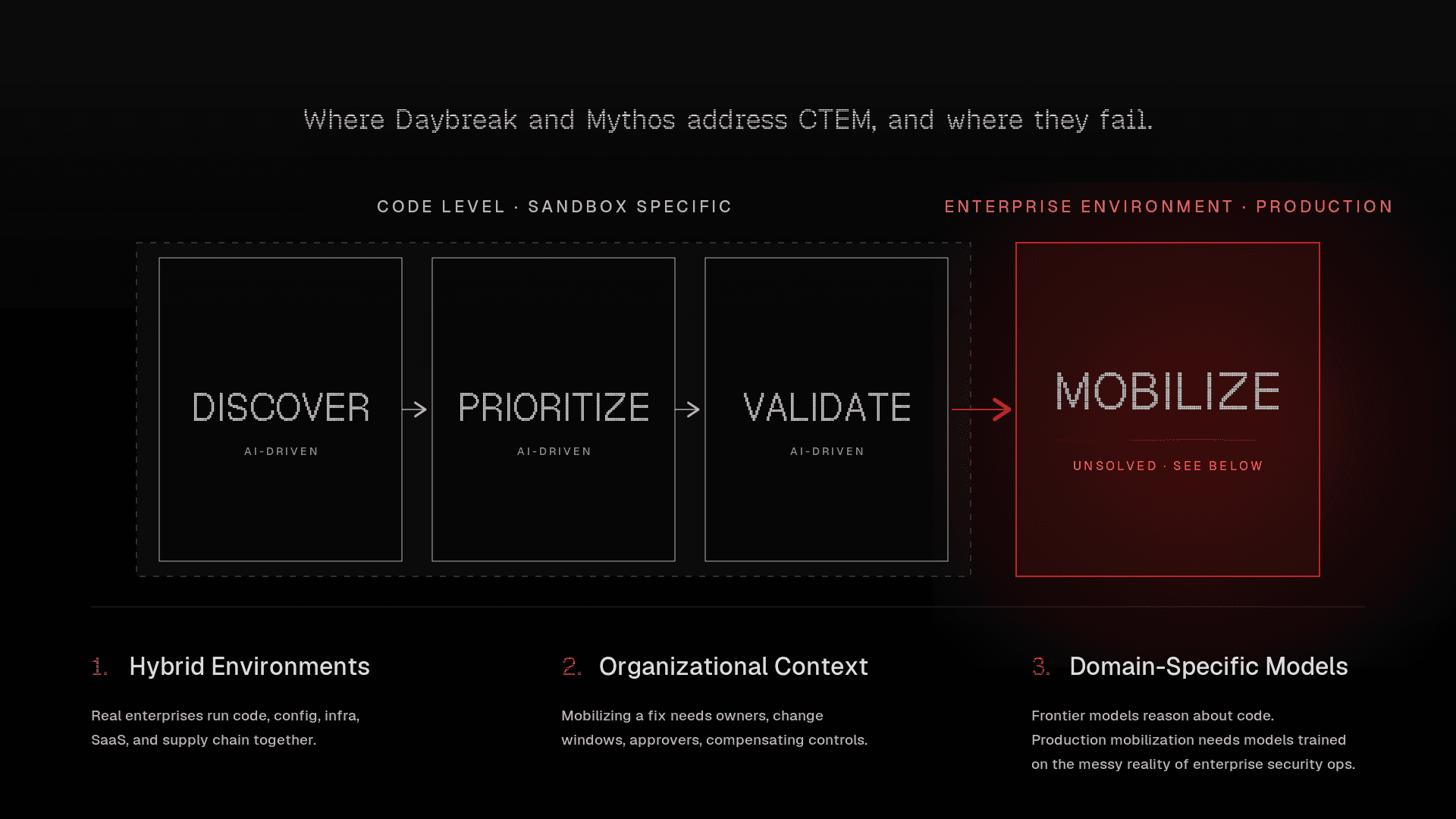

Beneath the marketing, both products are doing the same job: pointing a frontier-model harness at an exploitable surface, guided by what an attacker would actually try rather than by enumerating CVEs against an asset list. This is the same problem an entire generation of vulnerability vendors has been solving for two decades, albeit on a different time frame. Vulnerability scanners, AppSec tools, cloud security platforms - they all do versions of this work, and now Mythos and Daybreak do it at frontier-model speed. The job has the same shape regardless of who's running it: discover what's wrong, prioritize what matters. OpenAI says Daybreak proposes fixes too - but we all know that generating a patch in a sandbox is a long way from shipping one in production. The harder problem has always been the one that comes after.

And that's the question the marketing isn't really wrestling with. If AI has now made discovery trivial for both attackers and defenders, what do you actually do? How do you take an enterprise environment - with all the messy reality of vendor software, third-party SaaS, infrastructure drift, ownership gaps, and a constantly shifting attack surface - and move it toward a safer posture? How do you turn an exposure into a closed loop, on the time scale an AI-enabled adversary actually operates on? That's the question we built Cogent to answer.

Watch what the labs are fighting over, and watch what they aren't. Daybreak and Mythos are competing for the discovery-and-prioritization seat, which has been a real seat at the security industry's table for decades. The seat that's going to matter more is the one neither lab has stepped into: turning AI-driven exposure into action, across the whole environment, in time to matter. That's the seat we're built for.